Trending Now

Enterprise

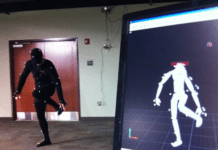

“Penumbra Announces Joint Ventures on VR in Health Space”

Penumbra recently announced a major investment in the healthcare sector. The company has combined healthcare apps with virtual reality technology in an effort to improve the field as a whole.

“Pueblo Mall – Smartphone App Adds Holiday Shopping Fun”

Pueblo Mall, the vibrant home of more than 80 of the world’s largest brands, invited its visitors to conjure up five...